Prerequirements:

In the post From integer numbers to real numbers we saw how, by means of the foundamental theorem of calculus, it’s possible to transform the difference between the values assumed by a function f defined on a set of integer numbers, that is f(b) - f(a), into the integral of an extension if it of class C^1, \int_a^b \overset{\sim}{f}^{\prime}(t) dt.

An analogue operation is also possible with a different technique, by applying some properties of integrals, when the extension is monotonous (i.e. increasing or decreasing). The advantage is again the possibility of using the tools of real analysis for studying functions originally defined on integer numbers. We’ll apply this technique to a logarithmic function, obtaining a lemma that will turn out to be useful in the future.

Upper and lower bounds for the value assumed by a function defined on integer numbers, by means of integrals of an extension of it

Let’s consider a real valued function f, defined on a set I \subset \mathbb{Z}, and an extension of it \overset{\sim}{f} defined on \overline{I} = \bigcup_{n \in I} [n, n+1) (see Definition N.9).

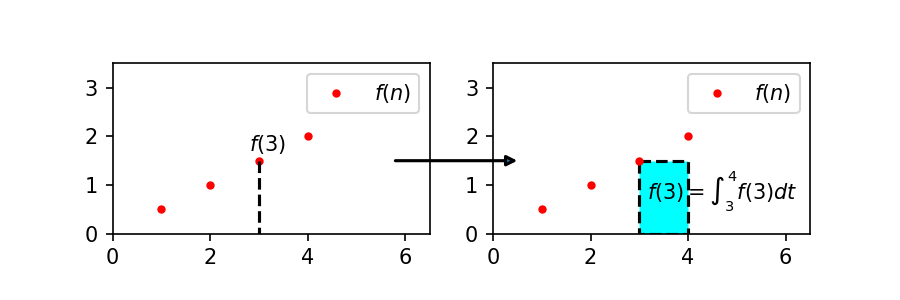

For all n \in I, we can see that the number f(n), assuming it to be positive for the sake of simplicity, is equal to the area of a rectangle with base 1 and height f(n), that in turn is equal to the integral of the constant function f(n) from n to n + 1:

An example is shown in the following Figure:

We obtained equality (1) by using the intuitive concept of area, however the formula is easily provable by formal methods.

Remembering that the primitive of a constant function c is the function c t in the variable t, for c := f(n) we have that

that is just (1).

But, by definition of extension, f(n) = \overset{\sim}{f}(n), so (1) can be rewritten in the following way:

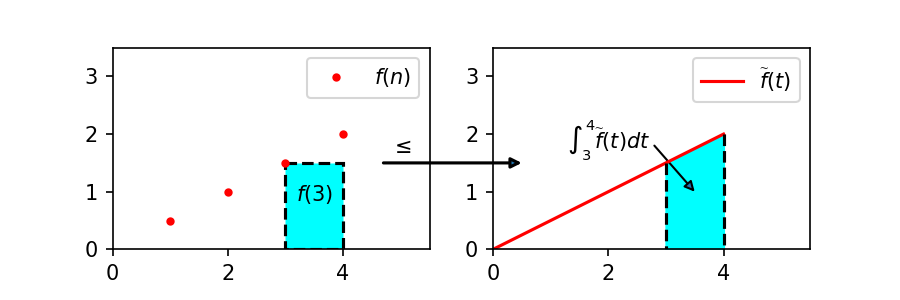

If in addition \overset{\sim}{f} is increasing, so that \overset{\sim}{f}(n) \leq \overset{\sim}{f}(t) for all t \in [n, n + 1], the integral of (2) can be overestimated as follows:

This kind of upper bound is often used in number theory, as we’ll see.

Figure 2 shows graphically equation (3), in the case of functions with non-negative values (in the case of function with negative values, (3) is still true, but it becomes more difficult to see it, due to the presence of negative areas):

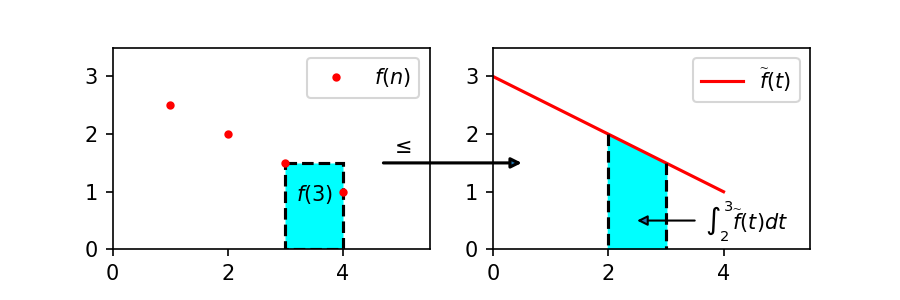

If instead the extension is decreasing, we can proceed in a similar way, taking as integration interval [n - 1, n] in place of [n, n + 1]. In this case, the extension \overset{\sim}{f} must be defined on \underline{I} := \bigcup_{n \in I} (n - 1, n] in place of \overline{I} (in order to be rigorous, we should introduce a new definition of extension, identical to Definition N.9 except for the use of \underline{I} in place of \overline{I}). In this way the following inequality, depicted in Figure 3, can be obtained:

In an analogue way we can find a lower bound for f(n), by integrating on the opposite side, that is on the left for increasing extensions and on the right for decreasing ones. So we can obtain the following Property:

Upper and lower bounds for the value assumed by a function defined on integer numbers, by means of integrals of an extension

Let f: I \rightarrow \mathbb{R} be a function defined on a set I \subset \mathbb{Z}.

Let \overset{\sim}{f}: \overline{I} \rightarrow \mathbb{R} be an extension of f, where \overline{I} := \bigcup_{n \in I} [n, n + 1). Then:

- If \overset{\sim}{f} is increasing, then

f(n) \leq \int_n^{n+1} \overset{\sim}{f}(t) dt \tag{4}

- If \overset{\sim}{f} is decreasing, then

f(n) \geq \int_n^{n+1} \overset{\sim}{f}(t) dt \tag{5}

Let \underset{\sim}{f}: \underline{I} \rightarrow \mathbb{R} be an extension of f, where \underline{I} := \bigcup_{n \in I} (n - 1, n]. Then:

- If \underset{\sim}{f} is increasing, then

\int_{n-1}^n \underset{\sim}{f}(t) dt \leq f(n) \tag{6}

- If \underset{\sim}{f} is decreasing, then

\int_{n-1}^n \underset{\sim}{f}(t) dt \geq f(n) \tag{7}

Application to the function \log \left(\frac{x}{n} \right) in the variable n

Given a fixed positive integer x, let’s apply Property N.4 to the function g_x: \{1, \ldots, x\} \rightarrow \mathbb{R} such that, for all n \in \{1, \ldots, x\}:

In order to apply Property N.4, we choose the most “natural” extension of g_x, i.e. the function \overset{\sim}{g_x}: (0, +\infty) \rightarrow \mathbb{R} such that, for all t \in (0, +\infty):

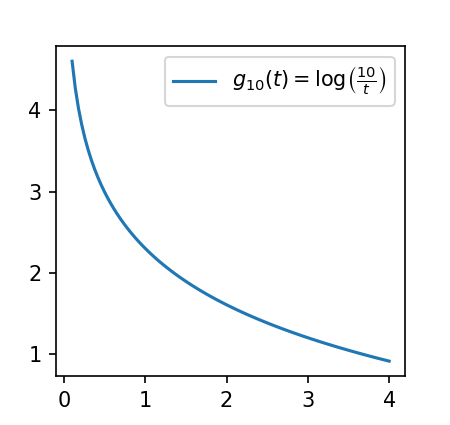

For better understanding what kind of function it is, let’s see the function graph for x=10:

By applying Property N.4, after appropriate considerations about the monotonicity and the integrability of this function on \overline{I} = \overline{\{1, \ldots, x\}} = [1, x + 1) and on \underline{I} = \underline{\{1, \ldots, x\}} = (0, x], we can conclude that:

- for all n \in \{1, \ldots, x\}, \log \left(\frac{x}{n} \right) \geq \int_n^{n+1} g_x(t) dt = \int_n^{n+1} \log \left(\frac{x}{t} \right) dt (by (5))

- for all n \in \{2, \ldots, x\}, \log \left(\frac{x}{n} \right) \leq \int_{n-1}^{n} g_x(t) dt = \int_{n-1}^{n} \log \left(\frac{x}{t} \right) dt (by (7))

What considerations are necessary in order to apply Property N.4 to the function g_x?

In order to apply Property N.4 to the function g_x, we have to make some considerations:

- The domain of the extension \overset{\sim}{g_x}, (0, +\infty), includes both \overline{I} = [1, x + 1) and \underline{I} = (0, x]

- Both on \overline{I} and \underline{I}, the function \overset{\sim}{g_x} is decreasing. In fact, as t varies between 1 and x + 1, the function \frac{x}{t} decreases from x to \frac{x}{x + 1} \lt 1, and consequently also \overset{\sim}{g_x}(t) = \log \left(\frac{x}{t}\right) decreases, from \log(x) to \log \left( \frac{x}{x + 1} \right) \lt 0. Similarly, as t varies between 0 (excluded) and x, \frac{x}{t} decreases from +\infty (excluded) to 1, and also \overset{\sim}{g_x}(t) = \log\left(\frac{x}{t}\right) decreases from +\infty (excluded) to \log(1) = 0.

- Being \overset{\sim}{g_x} decreasing both in \overline{I} and in \underline{I}, we could apply formulas (5) and (7); however, while \overset{\sim}{g_x} is integrable in all \overline{I}, we can’t say the same for \underline{I}, because as t \to 0, \overset{\sim}{g_x} \to +\infty, hence \overset{\sim}{g_x} is not integrable in (0, 1] (it’s improperly integrable, but this is a matter which should be examined in depth apart), so (7) cannot be applied for n = 1. But there are no problems in applying (7) for n \in \{2, \ldots, x\}, provided that x \geq 2, a condition we can set without influencing our conclusions.

Let’s develop the second inequality:

Remembering that (8) is valid for all n \in \{2, \ldots, x\}, we can sum up member by member, obtaining:

The introduction of integrals has been essential, as it lets us develop the last expression with the technique of variable substitution, letting u := \frac{x}{t}:

Letting u := \frac{x}{t}, we have that t = \frac{x}{u} and so dt = -\frac{x}{u^2} du. Moreover, as t varies between 1 and x, u varies, conversely, between \frac{x}{1} = x and \frac{x}{x} = 1 (for obtaining this symmetrical behaviour, it has been essential to sum up integrals, in the passage from (8) to (9)). Substituting into the integral of (9), we have that

At this point we can note that the integral on the right is a constant:

Noting that the integrand is positive and passing to the limit, we can write:

By the integral test for convergence, being the integrand positive and decreasing, the integral converges if and only if the associated series does. So let’s examine the associated series:

It’s a modified p-series, that converges, being the exponent of n at the denominator greater than 1. So, by the integral test, also the integral \int_1^{+\infty} \frac{\log u}{u^2} du converges, i.e. it’s equal to a real constant. Then, by (12), it’s even more true that also the starting integral \int_1^x \frac{\log u}{u^2} du is a real constant.

Joining formulas (9), (10) and (11), we have that

And so, in particular, considering the sum \sum_{n=2}^x \log \left(\frac{x}{n} \right) as a function of x, we can apply the Big oh notation writing that:

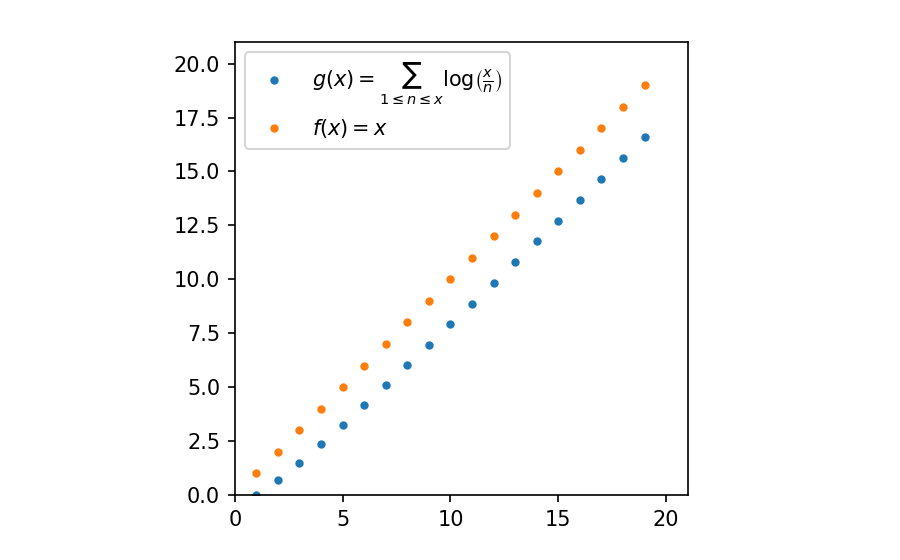

At this point, nothing changes if we add the term obtained for n = 1, that was discarded for technical reasons, obtaining the following function g: \mathbb{N}^{\star} \Rightarrow \mathbb{R}:

for which we have that:

where the last passage takes into account that \log x is definitively smaller than x.

So we have proved the following Lemma:

Order of magnitude of the sum of a fraction logarithms when the denominator changes

However this result is quite clear from the graph of the function g:

We can note that in the proof of Lemma N.6 nothing would change if, in place of the \log function, we used the \log^h function, for any real exponent h \gt 0. In fact by the same passages you can prove the following generalized form:

Order of magnitude of the sum of a fraction logarithms when the denominator changes, generalized form

Let h \in \mathbb{R}, h \gt 0. Then:

Now it’s quite natural that you ask yourself where the function g comes from: why have we studied just this function? We’ll see the answer in the next post.